Simplified Kubernetes setup with containerd: preparing a kubeadm installation

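For a detailed walkthrough, see the "Kubernetes Complete Setup" document. This article focuses on getting a cluster up quickly and works around many common pitfalls in advance.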

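Environment requirements

- A compatible Linux host. The Kubernetes project provides generic instructions for Debian- and Red Hat-based Linux distributions, and for distributions without a package manager.
- 2 GB or more of RAM per machine (less than this leaves little memory for your applications).
- 2 or more CPU cores.
- Full network connectivity between all machines in the cluster (a public or a private network both work).
- No duplicate hostnames, MAC addresses, or product_uuid values among the nodes. See the official kubeadm installation guide for details.
- Certain ports must be open on the machines. See the official kubeadm installation guide for details.
- Swap must be disabled. You must disable swap for the kubelet to work properly.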
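The sketch below checks the CPU, memory and swap requirements on each machine. It is a hypothetical helper: `meets_requirements` is not part of kubeadm. The 1700 MB memory floor is an assumption, chosen because `MemTotal` in /proc/meminfo reports a little less than the installed RAM.

```shell
#!/usr/bin/env bash
# Hypothetical preflight helper (not part of kubeadm): checks CPU count,
# memory and active swap against the requirements listed above.

# meets_requirements CPUS MEM_KB SWAP_KB
# Prints one line per problem and returns 1 if any requirement is not met.
meets_requirements() {
  local cpus=$1 mem_kb=$2 swap_kb=$3 rc=0
  if (( cpus < 2 )); then
    echo "need at least 2 CPUs, found $cpus"; rc=1
  fi
  # MemTotal is a little below the installed RAM, so 1700 MB is used as the floor (assumption)
  if (( mem_kb < 1700 * 1024 )); then
    echo "need about 2 GB RAM, found ${mem_kb} kB"; rc=1
  fi
  if (( swap_kb > 0 )); then
    echo "swap is still enabled (${swap_kb} kB)"; rc=1
  fi
  return "$rc"
}

# Values for this host; /proc/meminfo reports sizes in kB
cpus=$(nproc)
mem_kb=$(awk '/^MemTotal:/ {print $2}' /proc/meminfo)
swap_kb=$(awk '/^SwapTotal:/ {print $2}' /proc/meminfo)

if meets_requirements "$cpus" "$mem_kb" "$swap_kb"; then
  echo "preflight OK"
else
  echo "preflight FAILED"
fi
```

Run it once on every machine before continuing. `kubeadm init` and `kubeadm join` perform their own preflight checks later, so this is only an early warning.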

Deployment roles

| IP | Role | Installed software |
| --- | --- | --- |
| 192.168.245.151 | master | kube-apiserver |
| 192.168.245.152 | node01 | kubelet |
| 192.168.245.153 | node02 | kubelet |

Preparation

Run all of the following steps on all three nodes.

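Disable the firewall

First, disable the firewall on all nodes:

```shell
# Stop the running firewall
systemctl stop firewalld.service
# Prevent firewalld from starting at boot
systemctl disable firewalld.service
# Check the firewall status ("inactive (dead)" when stopped, "active (running)" when running)
systemctl status firewalld.service
```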

Change the hostname

The hostname must be changed on every node:

```shell
hostnamectl set-hostname master   # run on 192.168.245.151
hostnamectl set-hostname node01   # run on 192.168.245.152
hostnamectl set-hostname node02   # run on 192.168.245.153
```

Edit the hosts file

After changing the hostnames, map each IP address to its hostname in /etc/hosts so that the nodes can reach each other by hostname:

```shell
cat >> /etc/hosts << EOF
192.168.245.151 master
192.168.245.152 node01
192.168.245.153 node02
EOF
```
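Running the `cat >> /etc/hosts` command twice appends duplicate entries. Below is a minimal sketch of an idempotent alternative. It uses a hypothetical helper, `add_host_entry`, which is not part of any tool and appends an `IP hostname` line only when that hostname is not already mapped in the file.

```shell
#!/usr/bin/env bash
# add_host_entry FILE IP NAME
# Hypothetical helper: append "IP NAME" to FILE unless NAME is already mapped there.
add_host_entry() {
  local file=$1 ip=$2 name=$3
  # Look for NAME as a whole hostname field (field 2 onward) on a non-comment line
  if awk -v n="$name" '!/^[[:space:]]*#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
                       END { exit !found }' "$file"; then
    return 0
  fi
  printf '%s %s\n' "$ip" "$name" >> "$file"
}

# Usage on each node (as root):
# add_host_entry /etc/hosts 192.168.245.151 master
# add_host_entry /etc/hosts 192.168.245.152 node01
# add_host_entry /etc/hosts 192.168.245.153 node02
```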

Disable the firewall and NetworkManager

```shell
systemctl stop firewalld && systemctl disable firewalld
systemctl stop NetworkManager && systemctl disable NetworkManager
```

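Disable SELinux

```shell
# Switch SELinux to permissive mode for the running system
setenforce 0
# Disable it permanently (takes effect after the next reboot)
sed -i s/SELINUX=enforcing/SELINUX=disabled/ /etc/selinux/config
```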

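Disable swap

```shell
# Turn swap off for the running system
swapoff -a
# Comment out the swap entries in /etc/fstab so swap stays off after a reboot
sed -ri 's/.*swap.*/#&/' /etc/fstab
```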
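The `sed -ri 's/.*swap.*/#&/'` expression above puts a `#` in front of every line that contains "swap". Lines that are already commented out therefore end up with `##`. Below is a minimal sketch of a narrower variant. It assumes the usual fstab layout, in which the third field is the filesystem type, and the helper name `comment_swap_lines` is hypothetical.

```shell
#!/usr/bin/env bash
# comment_swap_lines FILE
# Hypothetical helper: comment out active fstab entries whose type field is "swap",
# leaving comments and all other mounts untouched.
comment_swap_lines() {
  local file=$1 tmp
  tmp=$(mktemp) || return 1
  # Lines already starting with '#' are printed unchanged; active entries whose
  # third field (filesystem type) is "swap" get a leading '#'
  awk '!/^[[:space:]]*#/ && $3 == "swap" { print "#" $0; next } { print }' "$file" > "$tmp" \
    && cat "$tmp" > "$file"   # write back in place so the file keeps its owner and mode
  rm -f "$tmp"
}

# Usage (as root), after turning swap off for the running system:
# swapoff -a
# comment_swap_lines /etc/fstab
```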

Install tool packages

Install the other required components and commonly used tools:

```shell
yum install -y chrony yum-utils zlib zlib-devel openssl openssl-devel \
  net-tools vim wget lsof unzip zip bind-utils lrzsz telnet
```

Time synchronization

```shell
systemctl enable chronyd --now
chronyc sources
```

Load the IPVS kernel modules

```shell
cat > /etc/sysconfig/modules/ipvs.modules <<EOF
#!/bin/bash
modprobe -- ip_vs
modprobe -- ip_vs_rr
modprobe -- ip_vs_wrr
modprobe -- ip_vs_sh
modprobe -- nf_conntrack_ipv4
EOF
chmod 755 /etc/sysconfig/modules/ipvs.modules \
  && bash /etc/sysconfig/modules/ipvs.modules \
  && lsmod | grep -e ip_vs -e nf_conntrack_ipv4
yum install -y ipset ipvsadm
```

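Notes

The script above creates /etc/sysconfig/modules/ipvs.modules so that the required modules are loaded automatically after a node reboots. Use `lsmod | grep -e ip_vs -e nf_conntrack_ipv4` to check that the kernel modules have been loaded correctly.

Every node must also have the ipset package installed: `yum install ipset -y`

To make the IPVS proxy rules easier to inspect, it is also worth installing the ipvsadm management tool: `yum install ipvsadm -y`

The last line of the script above already installs both packages.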
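The script loads `nf_conntrack_ipv4`, which exists on the stock CentOS 7 (3.10) kernel. In mainline Linux 4.19 that module was merged into `nf_conntrack`, so the `modprobe` line fails on newer kernels. Below is a minimal sketch that picks the module name from the kernel release string, assuming GNU `sort -V`. The helper `conntrack_module` is hypothetical. Distribution kernels may backport the change, so treat its answer as a first guess.

```shell
#!/usr/bin/env bash
# conntrack_module KERNEL_RELEASE
# Hypothetical helper: print the conntrack module to load for a kernel release string
# such as "3.10.0-1160.el7.x86_64" (mainline merged nf_conntrack_ipv4 into
# nf_conntrack in Linux 4.19).
conntrack_module() {
  local ver=${1%%-*}   # drop the distro suffix, e.g. "-1160.el7.x86_64"
  # If "4.19" sorts first (or the two are equal), the kernel is 4.19 or newer
  if [ "$(printf '%s\n' 4.19 "$ver" | sort -V | head -n1)" = "4.19" ]; then
    echo nf_conntrack
  else
    echo nf_conntrack_ipv4
  fi
}

# Usage: load the module that matches the running kernel
# modprobe -- "$(conntrack_module "$(uname -r)")"
```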

Configure kernel parameters (bridge netfilter and IP forwarding)

Pass bridged IPv4 traffic to the iptables chains:

```shell
modprobe br_netfilter
cat > /etc/sysctl.d/k8s.conf << EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
EOF
sysctl --system
```

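Parameter notes

bridge-nf lets netfilter filter IPv4, ARP and IPv6 packets that pass through a Linux bridge. The commonly used options are:

- net.bridge.bridge-nf-call-arptables: whether bridged ARP packets are filtered in the arptables FORWARD chain
- net.bridge.bridge-nf-call-ip6tables: whether IPv6 packets are filtered in the ip6tables chains
- net.bridge.bridge-nf-call-iptables: whether IPv4 packets are filtered in the iptables chains
- net.bridge.bridge-nf-filter-vlan-tagged: whether VLAN-tagged packets are filtered in iptables/arptables
- net.ipv4.ip_forward: whether the kernel forwards IPv4 packets between interfaces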
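`modprobe br_netfilter` only lasts until the next reboot. Listing the module in a file under /etc/modules-load.d/ (for example a file named k8s.conf, a name chosen here for illustration) makes systemd load it at boot. Below is a minimal sketch that confirms the three values are active by reading them from /proc/sys. The helper `sysctl_path` is hypothetical.

```shell
#!/usr/bin/env bash
# sysctl_path KEY: map a dotted sysctl key to its /proc/sys path
# (hypothetical helper; assumes no key component contains a literal dot)
sysctl_path() {
  echo "/proc/sys/${1//.//}"
}

# The bridge keys only exist under /proc/sys once br_netfilter is loaded
for key in net.bridge.bridge-nf-call-iptables net.bridge.bridge-nf-call-ip6tables net.ipv4.ip_forward; do
  path=$(sysctl_path "$key")
  if [ -r "$path" ] && [ "$(cat "$path")" = "1" ]; then
    echo "OK   $key = 1"
  else
    echo "FAIL $key (missing or not 1)"
  fi
done
```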

Install containerd

The following script installs containerd:

```shell
cd /root/
# Note: install as the root user
yum install libseccomp -y
wget https://download.fastgit.org/containerd/containerd/releases/download/v1.5.5/cri-containerd-cni-1.5.5-linux-amd64.tar.gz
# The tarball contains paths relative to /, so extract it there
tar -C / -xzf cri-containerd-cni-1.5.5-linux-amd64.tar.gz
# Single quotes keep $PATH unexpanded until ~/.bashrc is read
echo 'export PATH=$PATH:/usr/local/bin:/usr/local/sbin' >> ~/.bashrc
source ~/.bashrc
mkdir -p /etc/containerd
systemctl enable containerd --now
ctr version
```

Once containerd is installed, `ctr version` should print both the client and the server version.
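If `ctr version` reports "command not found", the PATH change has probably not been picked up yet. The sketch below lists which expected binaries are missing from PATH. The helper name is hypothetical. The binary list (`containerd`, `ctr`, `crictl`, `runc`) is an assumption about what the cri-containerd-cni tarball ships.

```shell
#!/usr/bin/env bash
# missing_bins NAME...: print every NAME that cannot be found in PATH
# (hypothetical helper)
missing_bins() {
  local b
  for b in "$@"; do
    command -v "$b" >/dev/null 2>&1 || echo "$b"
  done
}

missing=$(missing_bins containerd ctr crictl runc)
if [ -n "$missing" ]; then
  echo "not in PATH:" $missing
else
  echo "all containerd binaries found"
fi
```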

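Configure containerd

The complete configuration file is shown below. The settings that matter most for this setup are `SystemdCgroup = true` in the runc options (matching `cgroupDriver: systemd` for the kubelet), `sandbox_image`, and the registry mirrors for docker.io and k8s.gcr.io.

```shell
vi /etc/containerd/config.toml
```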

```toml
disabled_plugins = []
imports = []
oom_score = 0
plugin_dir = ""
required_plugins = []
root = "/var/lib/containerd"
state = "/run/containerd"
version = 2

[cgroup]
  path = ""

[debug]
  address = ""
  format = ""
  gid = 0
  level = ""
  uid = 0

[grpc]
  address = "/run/containerd/containerd.sock"
  gid = 0
  max_recv_message_size = 16777216
  max_send_message_size = 16777216
  tcp_address = ""
  tcp_tls_cert = ""
  tcp_tls_key = ""
  uid = 0

[metrics]
  address = ""
  grpc_histogram = false

[plugins]

  [plugins."io.containerd.gc.v1.scheduler"]
    deletion_threshold = 0
    mutation_threshold = 100
    pause_threshold = 0.02
    schedule_delay = "0s"
    startup_delay = "100ms"

  [plugins."io.containerd.grpc.v1.cri"]
    disable_apparmor = false
    disable_cgroup = false
    disable_hugetlb_controller = true
    disable_proc_mount = false
    disable_tcp_service = true
    enable_selinux = false
    enable_tls_streaming = false
    ignore_image_defined_volumes = false
    max_concurrent_downloads = 3
    max_container_log_line_size = 16384
    netns_mounts_under_state_dir = false
    restrict_oom_score_adj = false
    sandbox_image = "k8s.gcr.io/pause:3.5"
    selinux_category_range = 1024
    stats_collect_period = 10
    stream_idle_timeout = "4h0m0s"
    stream_server_address = "127.0.0.1"
    stream_server_port = "0"
    systemd_cgroup = false
    tolerate_missing_hugetlb_controller = true
    unset_seccomp_profile = ""

    [plugins."io.containerd.grpc.v1.cri".cni]
      bin_dir = "/opt/cni/bin"
      conf_dir = "/etc/cni/net.d"
      conf_template = ""
      max_conf_num = 1

    [plugins."io.containerd.grpc.v1.cri".containerd]
      default_runtime_name = "runc"
      disable_snapshot_annotations = true
      discard_unpacked_layers = false
      no_pivot = false
      snapshotter = "overlayfs"

      [plugins."io.containerd.grpc.v1.cri".containerd.default_runtime]
        base_runtime_spec = ""
        container_annotations = []
        pod_annotations = []
        privileged_without_host_devices = false
        runtime_engine = ""
        runtime_root = ""
        runtime_type = ""

        [plugins."io.containerd.grpc.v1.cri".containerd.default_runtime.options]

      [plugins."io.containerd.grpc.v1.cri".containerd.runtimes]

        [plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc]
          base_runtime_spec = ""
          container_annotations = []
          pod_annotations = []
          privileged_without_host_devices = false
          runtime_engine = ""
          runtime_root = ""
          runtime_type = "io.containerd.runc.v2"

          [plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc.options]
            BinaryName = ""
            CriuImagePath = ""
            CriuPath = ""
            CriuWorkPath = ""
            IoGid = 0
            IoUid = 0
            NoNewKeyring = false
            NoPivotRoot = false
            Root = ""
            ShimCgroup = ""
            SystemdCgroup = true

      [plugins."io.containerd.grpc.v1.cri".containerd.untrusted_workload_runtime]
        base_runtime_spec = ""
        container_annotations = []
        pod_annotations = []
        privileged_without_host_devices = false
        runtime_engine = ""
        runtime_root = ""
        runtime_type = ""

        [plugins."io.containerd.grpc.v1.cri".containerd.untrusted_workload_runtime.options]

    [plugins."io.containerd.grpc.v1.cri".image_decryption]
      key_model = "node"

    [plugins."io.containerd.grpc.v1.cri".registry]
      config_path = ""

      [plugins."io.containerd.grpc.v1.cri".registry.auths]

      [plugins."io.containerd.grpc.v1.cri".registry.configs]

      [plugins."io.containerd.grpc.v1.cri".registry.headers]

      [plugins."io.containerd.grpc.v1.cri".registry.mirrors]
        [plugins."io.containerd.grpc.v1.cri".registry.mirrors."docker.io"]
          endpoint = ["https://kvuwuws2.mirror.aliyuncs.com"]
        [plugins."io.containerd.grpc.v1.cri".registry.mirrors."k8s.gcr.io"]
          endpoint = ["https://registry.aliyuncs.com/k8sxio"]

    [plugins."io.containerd.grpc.v1.cri".x509_key_pair_streaming]
      tls_cert_file = ""
      tls_key_file = ""

  [plugins."io.containerd.internal.v1.opt"]
    path = "/opt/containerd"

  [plugins."io.containerd.internal.v1.restart"]
    interval = "10s"

  [plugins."io.containerd.metadata.v1.bolt"]
    content_sharing_policy = "shared"

  [plugins."io.containerd.monitor.v1.cgroups"]
    no_prometheus = false

  [plugins."io.containerd.runtime.v1.linux"]
    no_shim = false
    runtime = "runc"
    runtime_root = ""
    shim = "containerd-shim"
    shim_debug = false

  [plugins."io.containerd.runtime.v2.task"]
    platforms = ["linux/amd64"]

  [plugins."io.containerd.service.v1.diff-service"]
    default = ["walking"]

  [plugins."io.containerd.snapshotter.v1.aufs"]
    root_path = ""

  [plugins."io.containerd.snapshotter.v1.btrfs"]
    root_path = ""

  [plugins."io.containerd.snapshotter.v1.devmapper"]
    async_remove = false
    base_image_size = ""
    pool_name = ""
    root_path = ""

  [plugins."io.containerd.snapshotter.v1.native"]
    root_path = ""

  [plugins."io.containerd.snapshotter.v1.overlayfs"]
    root_path = ""

  [plugins."io.containerd.snapshotter.v1.zfs"]
    root_path = ""

[proxy_plugins]

[stream_processors]

  [stream_processors."io.containerd.ocicrypt.decoder.v1.tar"]
    accepts = ["application/vnd.oci.image.layer.v1.tar+encrypted"]
    args = ["--decryption-keys-path", "/etc/containerd/ocicrypt/keys"]
    env = ["OCICRYPT_KEYPROVIDER_CONFIG=/etc/containerd/ocicrypt/ocicrypt_keyprovider.conf"]
    path = "ctd-decoder"
    returns = "application/vnd.oci.image.layer.v1.tar"

  [stream_processors."io.containerd.ocicrypt.decoder.v1.tar.gzip"]
    accepts = ["application/vnd.oci.image.layer.v1.tar+gzip+encrypted"]
    args = ["--decryption-keys-path", "/etc/containerd/ocicrypt/keys"]
    env = ["OCICRYPT_KEYPROVIDER_CONFIG=/etc/containerd/ocicrypt/ocicrypt_keyprovider.conf"]
    path = "ctd-decoder"
    returns = "application/vnd.oci.image.layer.v1.tar+gzip"

[timeouts]
  "io.containerd.timeout.shim.cleanup" = "5s"
  "io.containerd.timeout.shim.load" = "5s"
  "io.containerd.timeout.shim.shutdown" = "3s"
  "io.containerd.timeout.task.state" = "2s"

[ttrpc]
  address = ""
  gid = 0
  uid = 0
```

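Start containerd

The containerd tarball downloaded above includes an etc/systemd/system/containerd.service unit file, so containerd can run as a daemon managed by systemd. Start it with:

```shell
systemctl daemon-reload
systemctl enable containerd --now
# containerd is already running from the install step; restart it so the edited config.toml takes effect
systemctl restart containerd
```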

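Verify

Once containerd is running, you can use its local CLI tools, ctr and crictl. For example, check the versions:

```shell
ctr version
crictl version
```

Both commands should print version information without errors.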

Install kubeadm

Kubernetes is deployed here with kubeadm. Run these steps on all nodes.

Add the Aliyun package repository

Install from the Aliyun mirror:

```shell
cat > /etc/yum.repos.d/kubernetes.repo << EOF
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF
```

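Install the components

Install kubelet, kubectl and kubeadm. Note: `--disableexcludes=kubernetes` makes yum ignore any `exclude=` rules defined for the `kubernetes` repository, so these packages can be installed even if they are excluded there.

```shell
# Online installation is fairly slow, so build the metadata cache first
yum makecache fast
yum install -y kubelet-1.22.10 kubeadm-1.22.10 kubectl-1.22.10 --disableexcludes=kubernetes
kubeadm version
```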

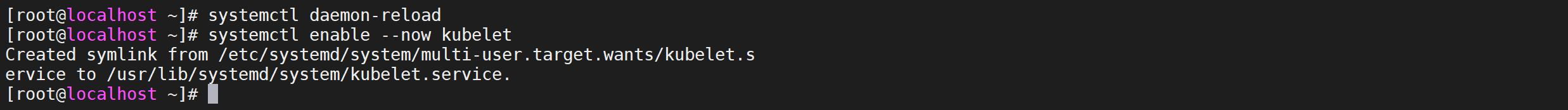

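Enable at boot

Start the kubelet and enable it at boot:

```shell
systemctl daemon-reload
systemctl enable --now kubelet
```

Until `kubeadm init` or `kubeadm join` has run, the kubelet restarts every few seconds because it has no configuration yet. This is expected.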

Verify

Check that kubeadm is installed, for example with `kubeadm version` as above. If it prints version information, the installation succeeded.

Command auto-completion

```shell
yum install -y bash-completion
source <(crictl completion bash)
crictl completion bash >/etc/bash_completion.d/crictl
source <(kubectl completion bash)
kubectl completion bash >/etc/bash_completion.d/kubectl
source /usr/share/bash-completion/bash_completion
```

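Pre-pull images

Some images need to be pulled in advance.

Pull coredns (retagged under the registry.aliyuncs.com/k8sxio name that kubeadm will look for):

```shell
ctr -n k8s.io i pull docker.io/coredns/coredns:1.8.4
ctr -n k8s.io i tag docker.io/coredns/coredns:1.8.4 registry.aliyuncs.com/k8sxio/coredns:v1.8.4
```

Pull pause (tagged as k8s.gcr.io/pause:3.5 to match `sandbox_image` in config.toml):

```shell
ctr -n k8s.io i pull registry.aliyuncs.com/k8sxio/pause:3.5
ctr -n k8s.io i tag registry.aliyuncs.com/k8sxio/pause:3.5 k8s.gcr.io/pause:3.5
```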
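Both pre-pull steps follow the same pattern: pull from a reachable registry, then tag the image under the name that will be requested. Below is a minimal sketch that generalizes this with two hypothetical helpers. `mirror_ref` moves the last path component of a reference under a mirror prefix. `pull_and_tag` only prints the `ctr` commands when `DRY_RUN=1` is set. The helpers cover the pause case directly. The coredns case also changes the tag (1.8.4 to v1.8.4), so pass its target name explicitly.

```shell
#!/usr/bin/env bash
# mirror_ref REF PREFIX -> PREFIX/<last path component of REF>
# e.g. k8s.gcr.io/pause:3.5 + registry.aliyuncs.com/k8sxio -> registry.aliyuncs.com/k8sxio/pause:3.5
mirror_ref() {
  echo "$2/${1##*/}"
}

# pull_and_tag SRC DST: pull SRC into the k8s.io namespace, then tag it as DST.
# With DRY_RUN=1 the commands are printed instead of executed.
pull_and_tag() {
  local run=""
  [ "${DRY_RUN:-0}" = 1 ] && run=echo
  $run ctr -n k8s.io images pull "$1" && $run ctr -n k8s.io images tag "$1" "$2"
}

# Usage (as root), equivalent to the two pause commands above:
# pull_and_tag "$(mirror_ref k8s.gcr.io/pause:3.5 registry.aliyuncs.com/k8sxio)" k8s.gcr.io/pause:3.5
```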

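Deploy the Kubernetes master

Run the following steps on the master node only.

Common kubeadm commands

| Command | Purpose |
| --- | --- |
| kubeadm init | Bootstraps a control-plane node |
| kubeadm join | Bootstraps a worker node and joins it to the cluster |
| kubeadm upgrade | Upgrades a Kubernetes cluster to a newer version |
| kubeadm config | If you initialized your cluster with kubeadm v1.7.x or earlier, configures your cluster for `kubeadm upgrade` |
| kubeadm token | Manages the tokens used by `kubeadm join` |
| kubeadm reset | Reverts any changes made to the node by `kubeadm init` or `kubeadm join` |
| kubeadm certs | Manages Kubernetes certificates |
| kubeadm kubeconfig | Manages kubeconfig files |
| kubeadm version | Prints the kubeadm version |
| kubeadm alpha | Previews a set of features made available for gathering community feedback |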

Edit the configuration file (kubeadm.yaml)

```yaml
apiVersion: kubeadm.k8s.io/v1beta3
bootstrapTokens:
- groups:
  - system:bootstrappers:kubeadm:default-node-token
  token: abcdef.0123456789abcdef
  ttl: 24h0m0s
  usages:
  - signing
  - authentication
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: 192.168.245.151
  bindPort: 6443
nodeRegistration:
  criSocket: /run/containerd/containerd.sock
  imagePullPolicy: IfNotPresent
  name: master
  taints:
  - effect: "NoSchedule"
    key: "node-role.kubernetes.io/master"
---
apiVersion: kubeproxy.config.k8s.io/v1alpha1
kind: KubeProxyConfiguration
mode: ipvs
---
apiServer:
  timeoutForControlPlane: 4m0s
apiVersion: kubeadm.k8s.io/v1beta3
certificatesDir: /etc/kubernetes/pki
clusterName: kubernetes
controllerManager: {}
dns: {}
etcd:
  local:
    dataDir: /var/lib/etcd
imageRepository: registry.aliyuncs.com/k8sxio
kind: ClusterConfiguration
kubernetesVersion: 1.22.10
networking:
  dnsDomain: cluster.local
  serviceSubnet: 10.96.0.0/12
  podSubnet: 10.244.0.0/16
scheduler: {}
---
apiVersion: kubelet.config.k8s.io/v1beta1
authentication:
  anonymous:
    enabled: false
  webhook:
    cacheTTL: 0s
    enabled: true
  x509:
    clientCAFile: /etc/kubernetes/pki/ca.crt
authorization:
  mode: Webhook
  webhook:
    cacheAuthorizedTTL: 0s
    cacheUnauthorizedTTL: 0s
cgroupDriver: systemd
clusterDNS:
- 10.96.0.10
clusterDomain: cluster.local
cpuManagerReconcilePeriod: 0s
evictionPressureTransitionPeriod: 0s
fileCheckFrequency: 0s
healthzBindAddress: 127.0.0.1
healthzPort: 10248
httpCheckFrequency: 0s
imageMinimumGCAge: 0s
kind: KubeletConfiguration
logging: {}
memorySwap: {}
nodeStatusReportFrequency: 0s
nodeStatusUpdateFrequency: 0s
rotateCertificates: true
runtimeRequestTimeout: 0s
shutdownGracePeriod: 0s
shutdownGracePeriodCriticalPods: 0s
staticPodPath: /etc/kubernetes/manifests
streamingConnectionIdleTimeout: 0s
syncFrequency: 0s
volumeStatsAggPeriod: 0s
```
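Instead of typing the whole file, you can generate a default with `kubeadm config print init-defaults --component-configs KubeletConfiguration,KubeProxyConfiguration > kubeadm.yaml` and then edit it. Below is a minimal sketch of patching a per-host field such as `advertiseAddress` with `sed`. The helper name is hypothetical, and it rewrites every line with the given key, so use it only for keys that appear once. The other changes shown above (criSocket, imageRepository, podSubnet, `mode: ipvs`, `cgroupDriver: systemd`) still need to be made by hand.

```shell
#!/usr/bin/env bash
# set_yaml_scalar FILE KEY VALUE
# Hypothetical helper: replace the value of every "KEY: ..." line, keeping its indentation.
set_yaml_scalar() {
  local file=$1 key=$2 value=$3
  sed -i -E "s|^([[:space:]]*${key}:).*|\1 ${value}|" "$file"
}

# Usage on the master:
# set_yaml_scalar kubeadm.yaml advertiseAddress 192.168.245.151
# set_yaml_scalar kubeadm.yaml kubernetesVersion 1.22.10
```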

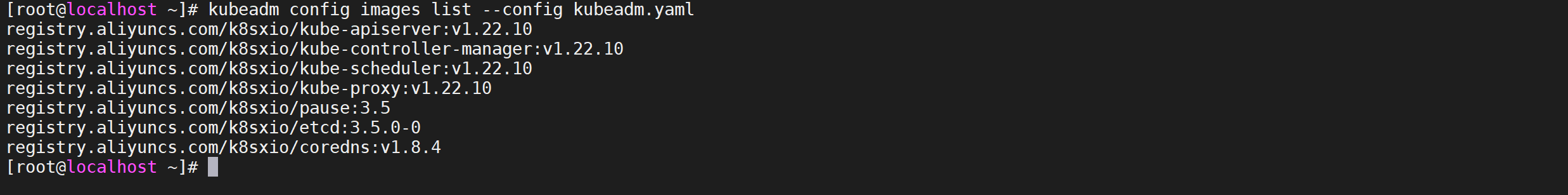

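List the required images

Before initializing the cluster, you can run `kubeadm config images pull --config kubeadm.yaml` to pre-pull the container images Kubernetes needs on each node. First, list the images that the configuration refers to:

```shell
kubeadm config images list --config kubeadm.yaml
```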

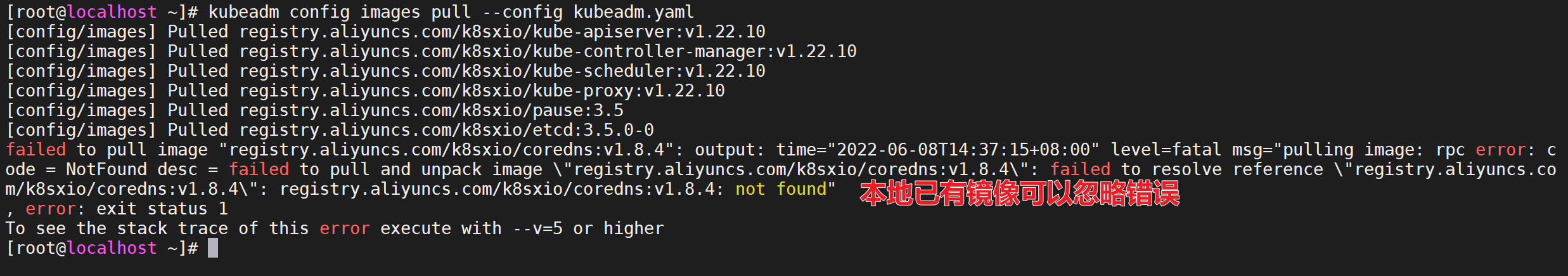

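Pull the images

With the configuration file ready, pull the required images in advance:

```shell
# This may take a while
kubeadm config images pull --config kubeadm.yaml
```

The pull command still reports an error for registry.aliyuncs.com/k8sxio/coredns:v1.8.4, even when you run it again. That image was already pulled and tagged locally in the pre-pull step, so you can ignore the error as long as the image exists locally.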
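To confirm that nothing except the ignorable error is actually missing, compare the list printed by `kubeadm config images list` with the images already in containerd's `k8s.io` namespace. Below is a minimal sketch with a hypothetical helper that assumes one image reference per line in each list.

```shell
#!/usr/bin/env bash
# missing_images REQUIRED_FILE LOCAL_FILE
# Hypothetical helper: print every reference in REQUIRED_FILE that does not
# appear as an exact line in LOCAL_FILE.
missing_images() {
  # grep exits with 1 when nothing is missing, which is not an error here
  grep -Fxv -f "$2" "$1" || true
}

# Usage on the master:
# kubeadm config images list --config kubeadm.yaml > /tmp/required.txt
# ctr -n k8s.io images ls -q > /tmp/local.txt
# missing_images /tmp/required.txt /tmp/local.txt
```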

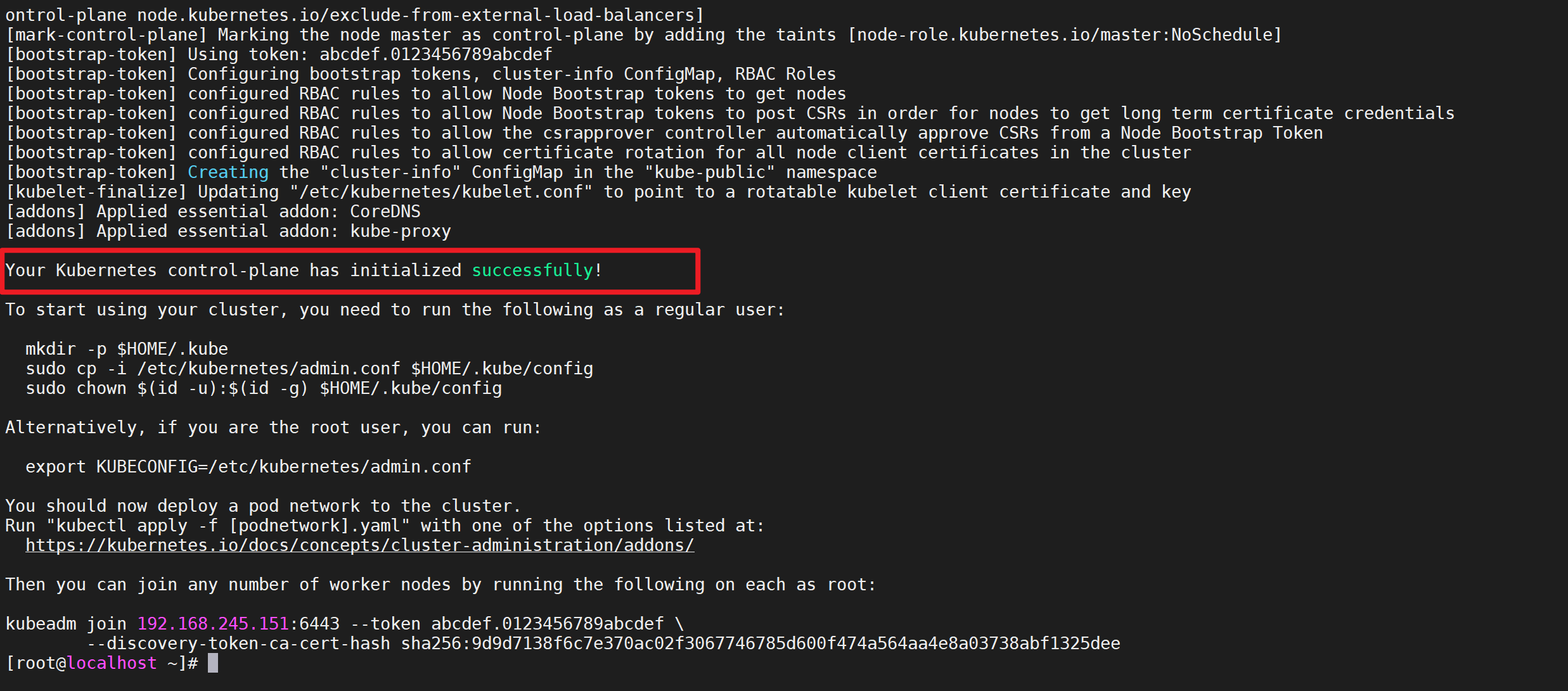

Initialize Kubernetes

Now initialize the master node with the configuration file above:

```shell
kubeadm init --config kubeadm.yaml
```

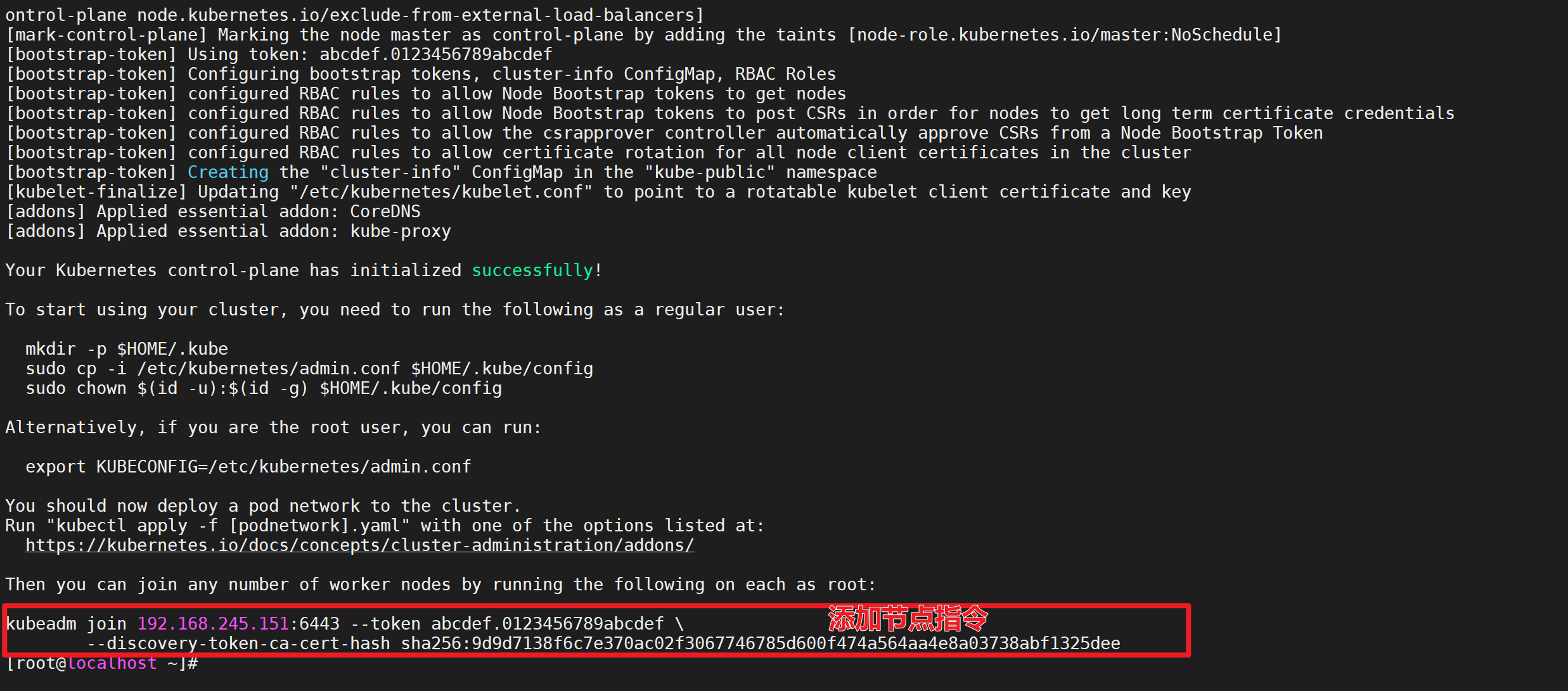

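Output like the above means that the Kubernetes master node has been initialized.

Run the follow-up commands

After a successful init, kubeadm prints some commands to run next:

```shell
mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config
# If Kubernetes was installed as root, run the following as well; otherwise skip it
export KUBECONFIG=/etc/kubernetes/admin.conf
```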

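Verify

Check that Kubernetes is installed correctly, for example with `kubectl get nodes`. If the master node is listed, the installation succeeded.

Add worker nodes

Find the join information

Make sure the preparation and configuration steps above have already been done on each worker node. If you want to run kubectl on a node, copy $HOME/.kube/config from the master to the same path on that node. Install kubeadm, kubelet and (optionally) kubectl, then run the join command printed at the end of the initialization.

If you have lost the join command, regenerate it with `kubeadm token create --print-join-command`.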
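The `--discovery-token-ca-cert-hash` value is the SHA-256 of the cluster CA's public key in DER form. The kubeadm documentation gives an `openssl` pipeline that recomputes it on the master. Below, that pipeline is wrapped in a function whose name, `ca_cert_hash`, is hypothetical. It assumes an RSA CA key, which is what kubeadm generates by default.

```shell
#!/usr/bin/env bash
# ca_cert_hash CERT -> "sha256:<hex>" for use with --discovery-token-ca-cert-hash
ca_cert_hash() {
  local hex
  # Extract the public key, convert it to DER, hash it, keep only the hex digest
  hex=$(openssl x509 -pubkey -noout -in "$1" \
    | openssl rsa -pubin -outform der 2>/dev/null \
    | openssl dgst -sha256 -hex \
    | sed 's/^.* //')
  echo "sha256:$hex"
}

# Usage on the master:
# ca_cert_hash /etc/kubernetes/pki/ca.crt
```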

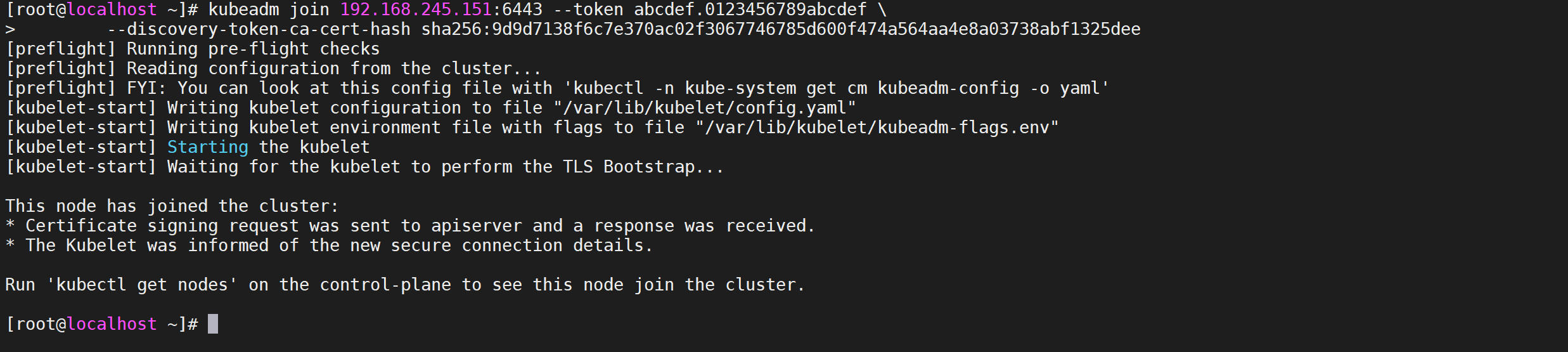

Run the join command

Run the join command on each worker node, once per node:

```shell
kubeadm join 192.168.245.151:6443 --token abcdef.0123456789abcdef \
  --discovery-token-ca-cert-hash sha256:9d9d7138f6c7e370ac02f3067746785d600f474a564aa4e8a03738abf1325dee
```

The node has now joined the Kubernetes cluster.

Verify

Once the join succeeds, run `kubectl get node` on the master.
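With several nodes, it helps to filter the `kubectl get nodes` output for anything that is not Ready. A node that stays NotReady usually points to a kubelet or CNI problem. Below is a minimal sketch with a hypothetical helper that reads the `--no-headers` output (NAME STATUS ROLES AGE VERSION) on stdin.

```shell
#!/usr/bin/env bash
# not_ready_nodes: read `kubectl get nodes --no-headers` output on stdin and print
# the name of every node whose STATUS is not Ready (hypothetical helper).
not_ready_nodes() {
  # "Ready,SchedulingDisabled" still counts as Ready
  awk '$2 !~ /^Ready(,|$)/ { print $1 }'
}

# Usage on the master:
# kubectl get nodes --no-headers | not_ready_nodes
```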

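Install the flannel network plugin

At this point the cluster is not yet fully usable, because no network plugin has been installed. Install one next.

Edit the configuration file

Create the flannel network with the following configuration file (kube-flannel.yml):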

```yaml
---
apiVersion: policy/v1beta1
kind: PodSecurityPolicy
metadata:
  name: psp.flannel.unprivileged
  annotations:
    seccomp.security.alpha.kubernetes.io/allowedProfileNames: docker/default
    seccomp.security.alpha.kubernetes.io/defaultProfileName: docker/default
    apparmor.security.beta.kubernetes.io/allowedProfileNames: runtime/default
    apparmor.security.beta.kubernetes.io/defaultProfileName: runtime/default
spec:
  privileged: false
  volumes:
  - configMap
  - secret
  - emptyDir
  - hostPath
  allowedHostPaths:
  - pathPrefix: "/etc/cni/net.d"
  - pathPrefix: "/etc/kube-flannel"
  - pathPrefix: "/run/flannel"
  readOnlyRootFilesystem: false
  runAsUser:
    rule: RunAsAny
  supplementalGroups:
    rule: RunAsAny
  fsGroup:
    rule: RunAsAny
  allowPrivilegeEscalation: false
  defaultAllowPrivilegeEscalation: false
  allowedCapabilities: ['NET_ADMIN', 'NET_RAW']
  defaultAddCapabilities: []
  requiredDropCapabilities: []
  hostPID: false
  hostIPC: false
  hostNetwork: true
  hostPorts:
  - min: 0
    max: 65535
  seLinux:
    rule: 'RunAsAny'
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
rules:
- apiGroups: ['extensions']
  resources: ['podsecuritypolicies']
  verbs: ['use']
  resourceNames: ['psp.flannel.unprivileged']
- apiGroups:
  - ""
  resources:
  - pods
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes/status
  verbs:
  - patch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-system
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: flannel
  namespace: kube-system
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-system
  labels:
    tier: node
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
      hostNetwork: true
      priorityClassName: system-node-critical
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni-plugin
        image: rancher/mirrored-flannelcni-flannel-cni-plugin:v1.2
        command:
        - cp
        args:
        - -f
        - /flannel
        - /opt/cni/bin/flannel
        volumeMounts:
        - name: cni-plugin
          mountPath: /opt/cni/bin
      - name: install-cni
        image: quay.io/coreos/flannel:v0.15.0
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: quay.io/coreos/flannel:v0.15.0
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN", "NET_RAW"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni-plugin
        hostPath:
          path: /opt/cni/bin
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg
```

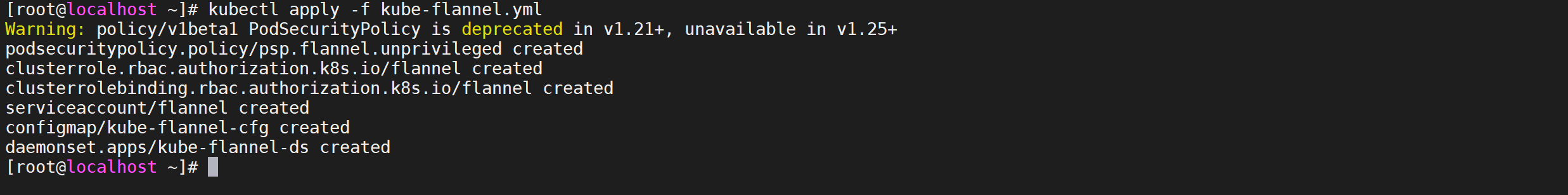

Apply the network

The file needs no changes. Apply it directly:

```shell
# Install the flannel network plugin
kubectl apply -f kube-flannel.yml
```
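The file works unchanged only because the `Network` value in its `net-conf.json` (10.244.0.0/16) matches `podSubnet` in kubeadm.yaml. Below is a minimal sketch that compares the two before applying. It assumes both files are in the current directory and uses simple text matching rather than a YAML or JSON parser. Both helper names are hypothetical.

```shell
#!/usr/bin/env bash
# pod_subnet FILE: value of the first "podSubnet:" line in a kubeadm config
pod_subnet() {
  awk '$1 == "podSubnet:" { print $2; exit }' "$1"
}

# flannel_network FILE: value of "Network" inside the flannel net-conf.json block
flannel_network() {
  sed -n -E 's/.*"Network":[[:space:]]*"([^"]+)".*/\1/p' "$1" | head -n1
}

# Usage on the master:
# [ "$(pod_subnet kubeadm.yaml)" = "$(flannel_network kube-flannel.yml)" ] && echo "subnets match"
```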

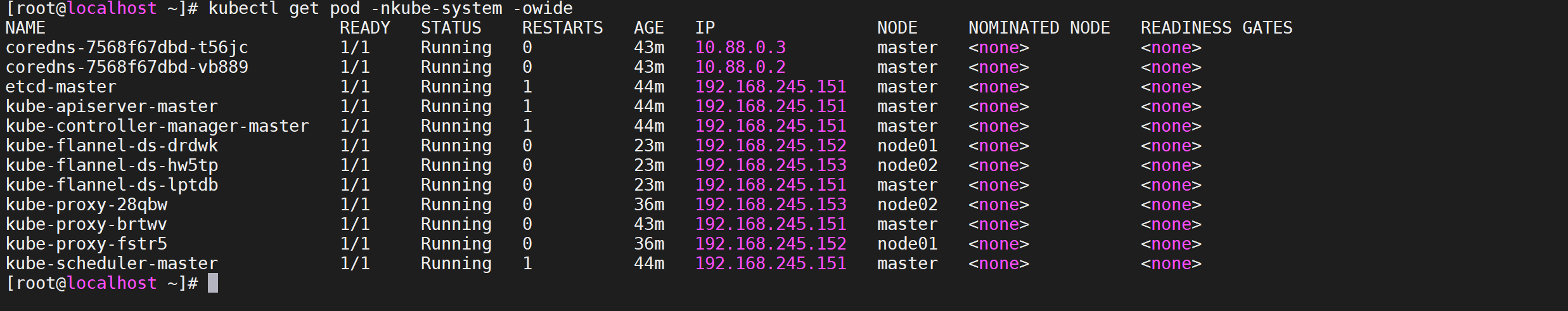

Check the Pod status

Check the status of the Pods:

```shell
kubectl get pod -n kube-system -o wide
```

If all Pods are running normally, the installation succeeded.
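"Running normally" can also be checked mechanically. A Pod counts as healthy here if its STATUS is Completed, or if it is Running with all of its containers ready. Below is a minimal sketch with a hypothetical helper that reads `kubectl get pod -A --no-headers` output (NAMESPACE NAME READY STATUS ...) on stdin.

```shell
#!/usr/bin/env bash
# unhealthy_pods: print namespace/name for every pod that is neither Completed
# nor Running with all containers ready (hypothetical helper).
unhealthy_pods() {
  awk '{ split($3, r, "/") }                 # READY column, e.g. "1/1"
       $4 == "Completed" { next }
       $4 != "Running" || r[1] != r[2] { print $1 "/" $2 }'
}

# Usage on the master:
# kubectl get pod -A --no-headers | unhealthy_pods
```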

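Install the Dashboard

A v1.22.10 cluster needs a Dashboard of version 2.0 or later (v2.4.0 is used here). Run these steps on the master node.

Edit the configuration file

The Service has been changed to `type: NodePort`. The file below (kube-dashboard.yaml) already includes this change and can be used as-is: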

```yaml
apiVersion: v1
kind: Namespace
metadata:
  name: kubernetes-dashboard
---
apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
---
kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  ports:
    - port: 443
      targetPort: 8443
  selector:
    k8s-app: kubernetes-dashboard
  type: NodePort
---
apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-certs
  namespace: kubernetes-dashboard
type: Opaque
---
apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-csrf
  namespace: kubernetes-dashboard
type: Opaque
data:
  csrf: ""
---
apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-key-holder
  namespace: kubernetes-dashboard
type: Opaque
---
kind: ConfigMap
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-settings
  namespace: kubernetes-dashboard
---
kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
rules:
  - apiGroups: [""]
    resources: ["secrets"]
    resourceNames: ["kubernetes-dashboard-key-holder", "kubernetes-dashboard-certs", "kubernetes-dashboard-csrf"]
    verbs: ["get", "update", "delete"]
  - apiGroups: [""]
    resources: ["configmaps"]
    resourceNames: ["kubernetes-dashboard-settings"]
    verbs: ["get", "update"]
  - apiGroups: [""]
    resources: ["services"]
    resourceNames: ["heapster", "dashboard-metrics-scraper"]
    verbs: ["proxy"]
  - apiGroups: [""]
    resources: ["services/proxy"]
    resourceNames: ["heapster", "http:heapster:", "https:heapster:", "dashboard-metrics-scraper", "http:dashboard-metrics-scraper"]
    verbs: ["get"]
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
rules:
  - apiGroups: ["metrics.k8s.io"]
    resources: ["pods", "nodes"]
    verbs: ["get", "list", "watch"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: kubernetes-dashboard
subjects:
  - kind: ServiceAccount
    name: kubernetes-dashboard
    namespace: kubernetes-dashboard
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: kubernetes-dashboard
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: kubernetes-dashboard
subjects:
  - kind: ServiceAccount
    name: kubernetes-dashboard
    namespace: kubernetes-dashboard
---
kind: Deployment
apiVersion: apps/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      k8s-app: kubernetes-dashboard
  template:
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
    spec:
      containers:
        - name: kubernetes-dashboard
          image: kubernetesui/dashboard:v2.4.0
          imagePullPolicy: Always
          ports:
            - containerPort: 8443
              protocol: TCP
          args:
            - --auto-generate-certificates
            - --namespace=kubernetes-dashboard
          volumeMounts:
            - name: kubernetes-dashboard-certs
              mountPath: /certs
            - mountPath: /tmp
              name: tmp-volume
          livenessProbe:
            httpGet:
              scheme: HTTPS
              path: /
              port: 8443
            initialDelaySeconds: 30
            timeoutSeconds: 30
          securityContext:
            allowPrivilegeEscalation: false
            readOnlyRootFilesystem: true
            runAsUser: 1001
            runAsGroup: 2001
      volumes:
        - name: kubernetes-dashboard-certs
          secret:
            secretName: kubernetes-dashboard-certs
        - name: tmp-volume
          emptyDir: {}
      serviceAccountName: kubernetes-dashboard
      nodeSelector:
        "kubernetes.io/os": linux
      tolerations:
        - key: node-role.kubernetes.io/master
          effect: NoSchedule
---
kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: dashboard-metrics-scraper
  name: dashboard-metrics-scraper
  namespace: kubernetes-dashboard
spec:
  ports:
    - port: 8000
      targetPort: 8000
  selector:
    k8s-app: dashboard-metrics-scraper
---
kind: Deployment
apiVersion: apps/v1
metadata:
  labels:
    k8s-app: dashboard-metrics-scraper
  name: dashboard-metrics-scraper
  namespace: kubernetes-dashboard
spec:
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      k8s-app: dashboard-metrics-scraper
  template:
    metadata:
      labels:
        k8s-app: dashboard-metrics-scraper
    spec:
      securityContext:
        seccompProfile:
          type: RuntimeDefault
      containers:
        - name: dashboard-metrics-scraper
          image: kubernetesui/metrics-scraper:v1.0.7
          ports:
            - containerPort: 8000
              protocol: TCP
          livenessProbe:
            httpGet:
              scheme: HTTP
              path: /
              port: 8000
            initialDelaySeconds: 30
            timeoutSeconds: 30
          volumeMounts:
            - mountPath: /tmp
              name: tmp-volume
          securityContext:
            allowPrivilegeEscalation: false
            readOnlyRootFilesystem: true
            runAsUser: 1001
            runAsGroup: 2001
      serviceAccountName: kubernetes-dashboard
      nodeSelector:
        "kubernetes.io/os": linux
      tolerations:
        - key: node-role.kubernetes.io/master
          effect: NoSchedule
      volumes:
        - name: tmp-volume
          emptyDir: {}
```

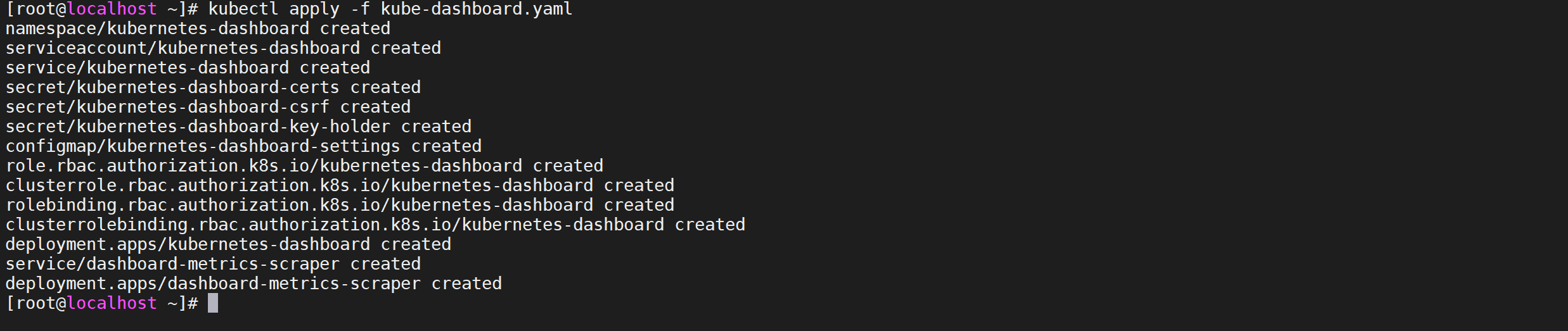

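Deploy the Dashboard

The YAML file shows that the new Dashboard version bundles a metrics-scraper component. It collects basic resource metrics through the Kubernetes Metrics API and displays them in the web UI. To show metrics in the UI, the Metrics API must therefore be available, for example by installing Metrics Server.

```shell
kubectl apply -f kube-dashboard.yaml
```

The new Dashboard is installed into the kubernetes-dashboard namespace by default: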

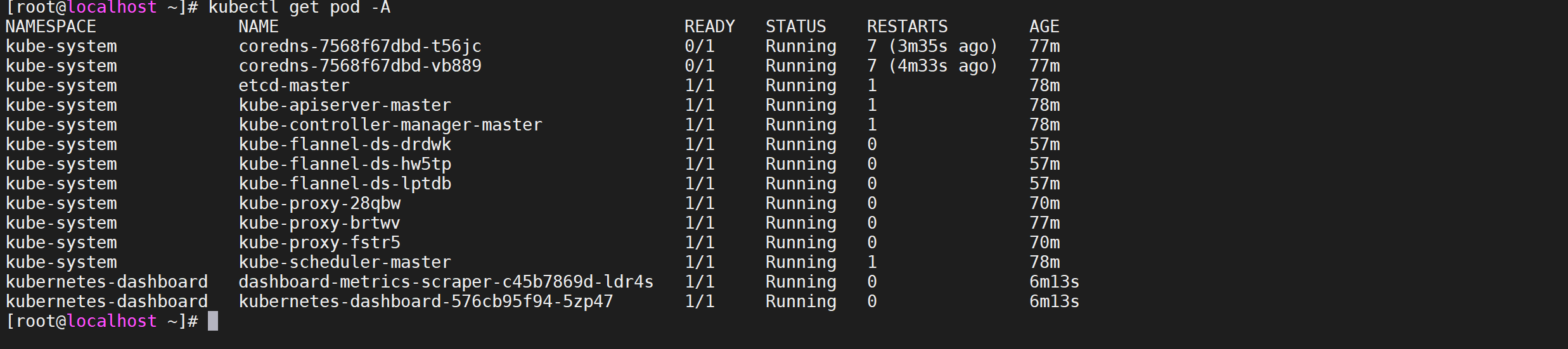

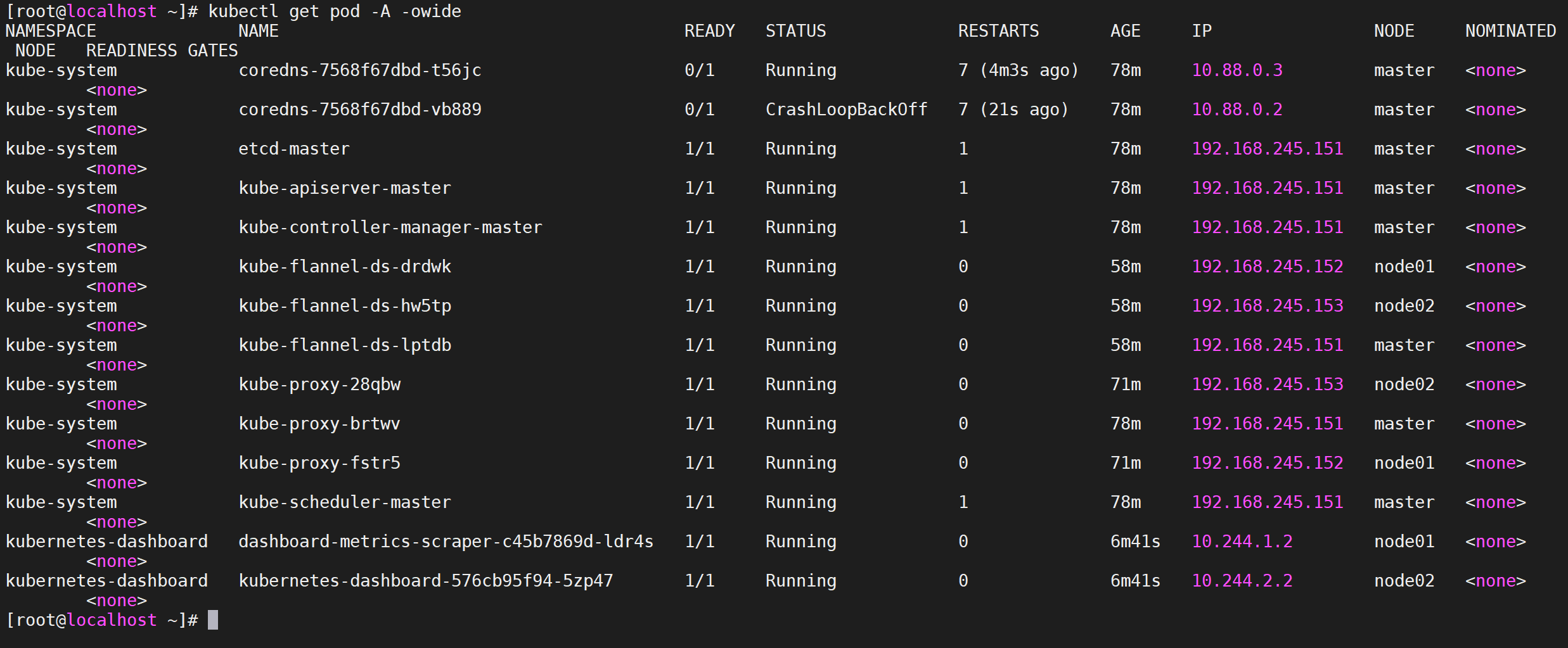

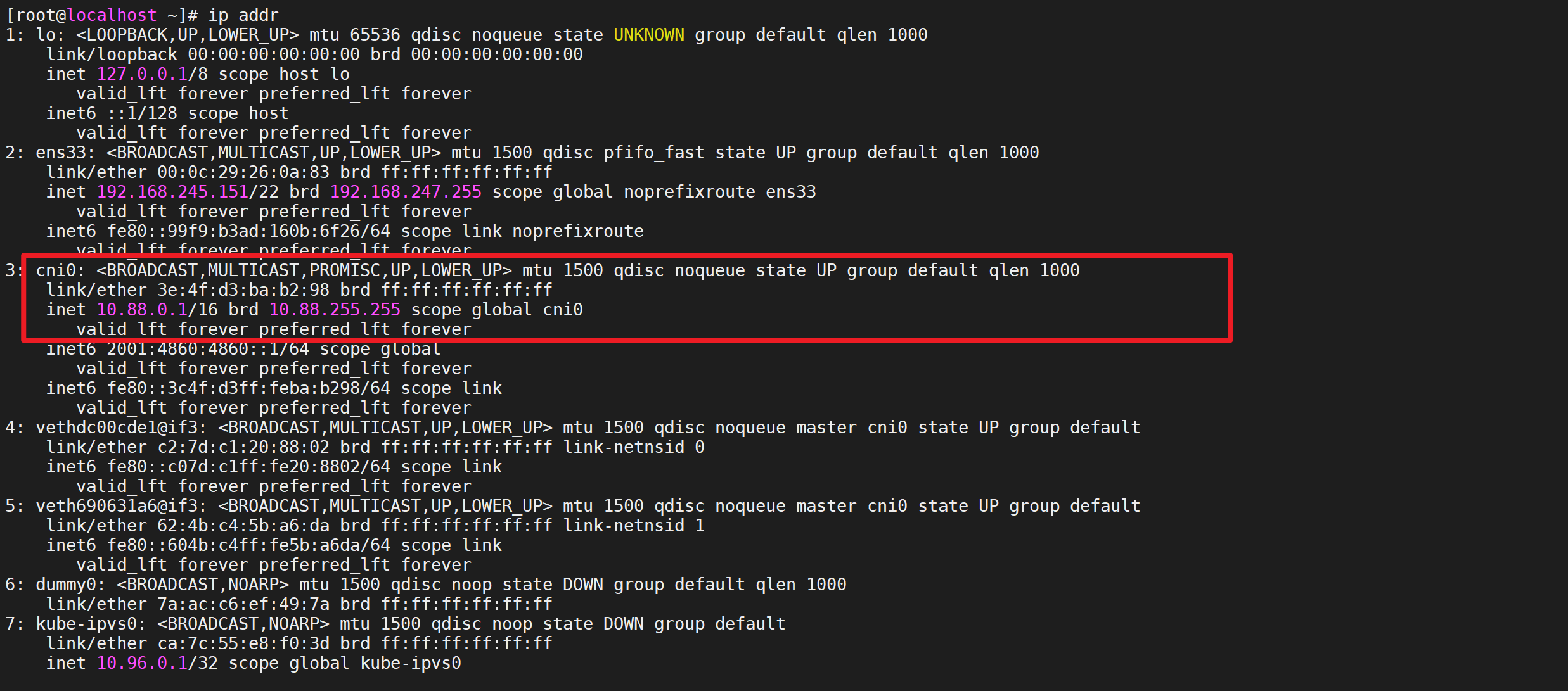

Configure CNI

Looking closely, the Pods above were assigned IPs in the 10.88.xx.xx range, and so was the automatically installed CoreDNS. Yet podSubnet was set to 10.244.0.0/16 earlier.

```shell
kubectl get pod -A -o wide
```

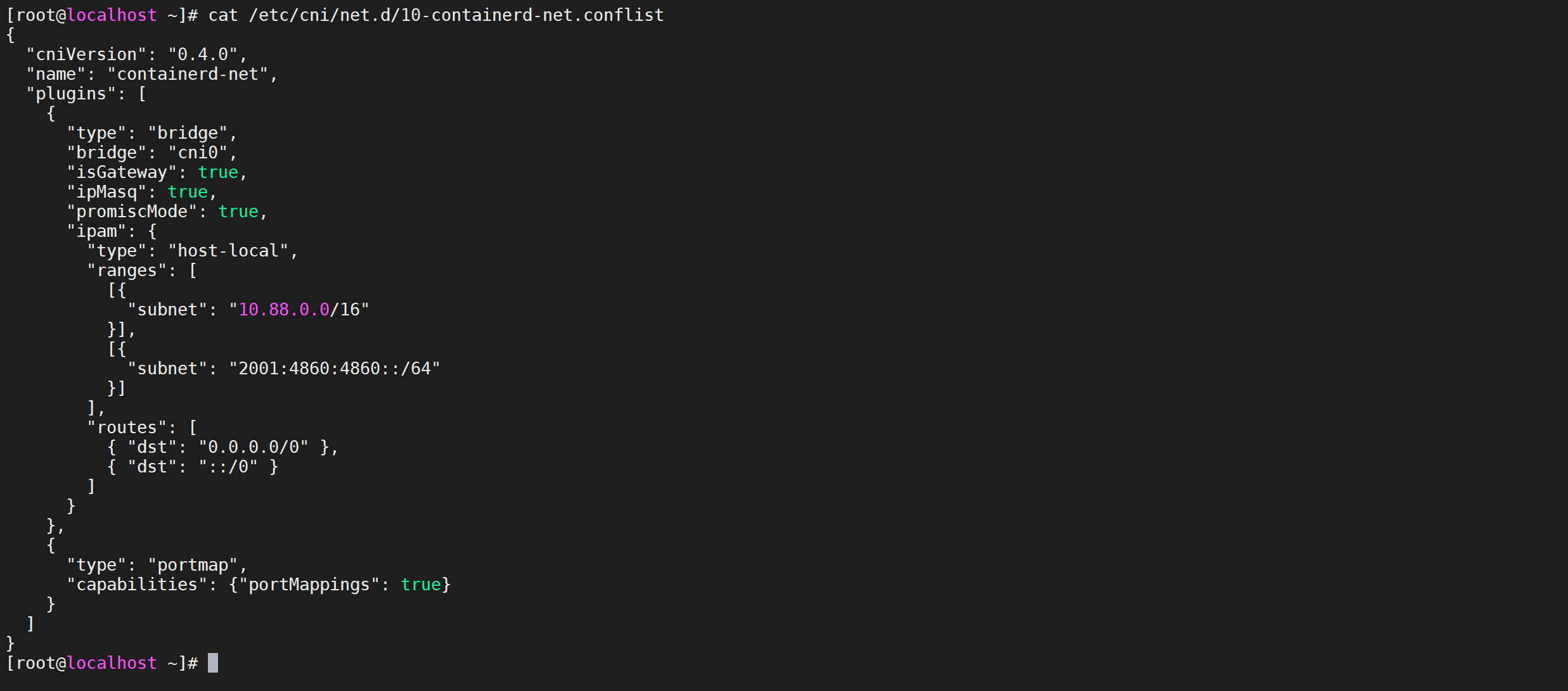

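Inspect the CNI configuration

First, look at the CNI configuration files (`ls /etc/cni/net.d`). There are two of them. One is 10-containerd-net.conflist. The other, 10-flannel.conflist, was generated by the Flannel plugin installed above. We want the Flannel configuration to be used. Look at the configuration of containerd's bundled CNI plugin:

```shell
cat /etc/cni/net.d/10-containerd-net.conflist
```

Its IP range is exactly 10.88.0.0/16. The plugin type is `bridge`, and the bridge is named cni0.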

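Fix the CNI configuration

Containers on a `bridge` network cannot communicate across hosts. Cross-host traffic needs another CNI plugin, such as Flannel (installed above) or Calico. Because there are two CNI configuration files, remove 10-containerd-net.conflist. When the directory holds several CNI configuration files, the container runtime uses the first one in lexicographic file-name order (and `max_conf_num = 1` in the containerd config above loads only one). That is why the containerd-net plugin was chosen by default.

Do this on the worker nodes, then repeat it in the same way on every other node in the cluster:

```shell
# Keep a backup instead of deleting containerd's bridge config
mv /etc/cni/net.d/10-containerd-net.conflist{,.bak}
# Delete the cni0 bridge that was created with the old 10.88.0.0/16 configuration
ifconfig cni0 down && ip link delete cni0
# Restart the runtime and the kubelet so the remaining (flannel) config is used
systemctl daemon-reload
systemctl restart containerd kubelet
```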
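To see which file would be picked before and after the rename, the sketch below mimics the lexicographic selection just described. It assumes that only files ending in `.conf`, `.conflist` or `.json` count as CNI configurations, as in libcni's default filtering. The helper name is hypothetical.

```shell
#!/usr/bin/env bash
# first_cni_conf DIR: print the CNI config file that sorts first by name
# (hypothetical helper; byte-wise sort via LC_ALL=C)
first_cni_conf() {
  ls -1 "$1" | grep -E '\.(conf|conflist|json)$' | LC_ALL=C sort | head -n1
}

# Usage on any node:
# first_cni_conf /etc/cni/net.d   # expected: 10-flannel.conflist after the rename
```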

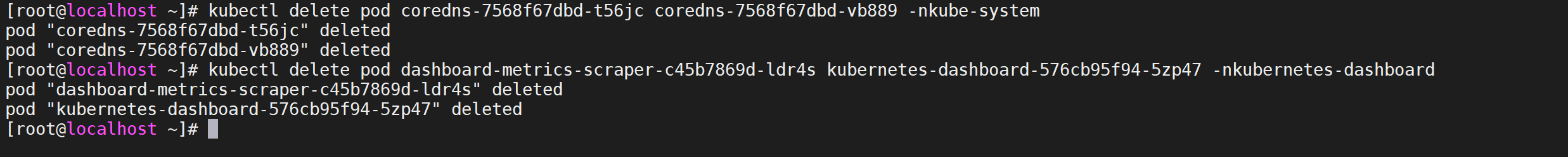

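Recreate the Pods

Next, recreate the coredns and dashboard Pods. After that, their IP addresses are correct. The Pod names below come from this environment, so substitute your own from `kubectl get pod -A`:

```shell
kubectl delete pod coredns-7568f67dbd-t56jc coredns-7568f67dbd-vb889 -n kube-system
kubectl delete pod dashboard-metrics-scraper-c45b7869d-ldr4s kubernetes-dashboard-576cb95f94-5zp47 -n kubernetes-dashboard
# Alternatively, select the Pods by label or namespace instead of by name:
# kubectl -n kube-system delete pod -l k8s-app=kube-dns
# kubectl -n kubernetes-dashboard delete pod --all
```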

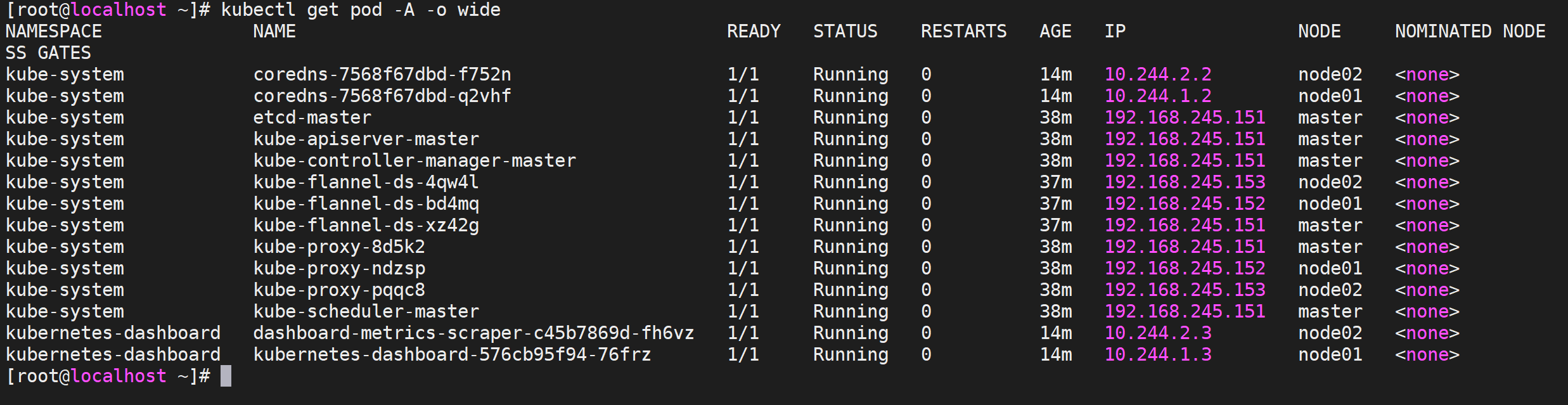

Check the Pods

```shell
kubectl get pod -A -o wide
```

All Pods are now in a normal state.
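To check the new addresses without reading the table by eye, the sketch below uses pure bash (hypothetical helpers `ip_to_int` and `ip_in_cidr`, IPv4 only) to test whether an address lies inside the podSubnet 10.244.0.0/16. Pods that use `hostNetwork`, such as kube-proxy, flannel and the control-plane components, report the node IP and are expected to fall outside it.

```shell
#!/usr/bin/env bash
# ip_to_int A.B.C.D -> the address as a 32-bit integer
ip_to_int() {
  local IFS=.
  local -a o
  read -r -a o <<< "$1"
  echo $(( (o[0] << 24) | (o[1] << 16) | (o[2] << 8) | o[3] ))
}

# ip_in_cidr IP NET/BITS: exit status 0 if IP lies inside the CIDR block
ip_in_cidr() {
  local net=${2%/*} bits=${2#*/}
  # Netmask with the top BITS bits set (bash arithmetic is 64-bit, so trim to 32)
  local mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  (( ($(ip_to_int "$1") & mask) == ($(ip_to_int "$net") & mask) ))
}

# Usage on the master: list Pods whose IP is outside the pod subnet
# kubectl get pod -A -o jsonpath='{range .items[*]}{.metadata.name}{" "}{.status.podIP}{"\n"}{end}' \
#   | while read -r name ip; do ip_in_cidr "$ip" 10.244.0.0/16 || echo "$name $ip"; done
```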

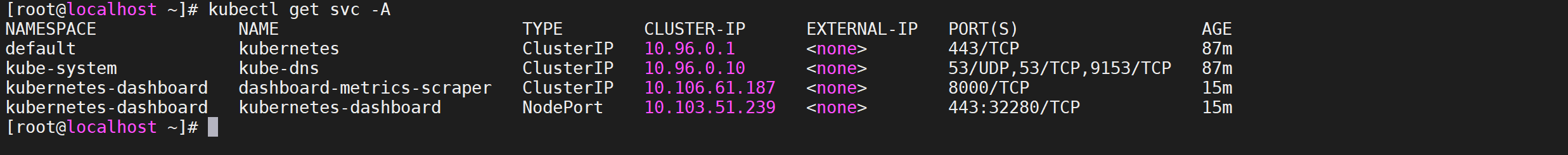

Check the Dashboard port

Look up the Dashboard's NodePort, for example with `kubectl get svc -n kubernetes-dashboard`:

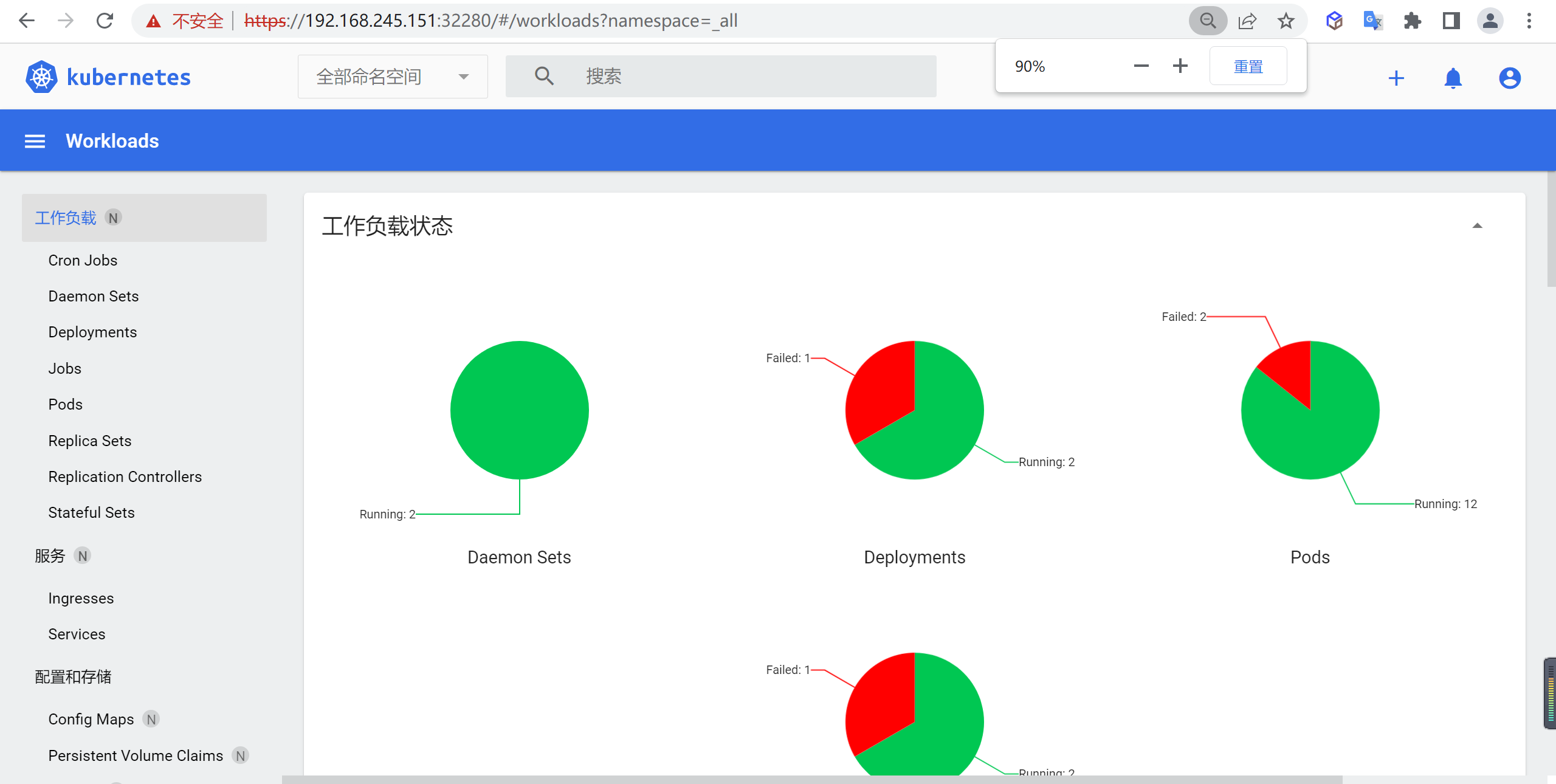
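The NodePort is assigned at random from the default 30000-32767 range, so the port number differs from cluster to cluster. The sketch below extracts it from the PORT(S) column (for example `443:32280/TCP`). The helper name is hypothetical. `kubectl -n kubernetes-dashboard get svc kubernetes-dashboard -o jsonpath='{.spec.ports[0].nodePort}'` returns the same number directly.

```shell
#!/usr/bin/env bash
# nodeport_of PORTS: "443:32280/TCP" -> "32280" (first port mapping only)
nodeport_of() {
  local first=${1%%,*}     # keep only the first mapping if several are listed
  local rest=${first#*:}   # drop the service port, e.g. "32280/TCP"
  echo "${rest%%/*}"       # drop the protocol
}

# Usage on the master (column 5 of `kubectl get svc` is PORT(S)):
# ports=$(kubectl -n kubernetes-dashboard get svc kubernetes-dashboard --no-headers | awk '{print $5}')
# echo "https://192.168.245.151:$(nodeport_of "$ports")/"
```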

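Access the Dashboard

Open the access page

You can now reach the Dashboard through port 32280 shown above. Remember to use https. If it does not work in Chrome, try Firefox. If the page still does not open in Firefox, click to trust the certificate on the page:

```
https://192.168.245.151:32280/
```

After you trust the certificate, the Dashboard login page appears: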

Create an access user

Next, create a user with full cluster-wide permissions to log in to the Dashboard (admin.yaml):

```yaml
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: admin
roleRef:
  kind: ClusterRole
  name: cluster-admin
  apiGroup: rbac.authorization.k8s.io
subjects:
- kind: ServiceAccount
  name: admin
  namespace: kubernetes-dashboard
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: admin
  namespace: kubernetes-dashboard
```

Apply it directly:

```shell
kubectl apply -f admin.yaml
```

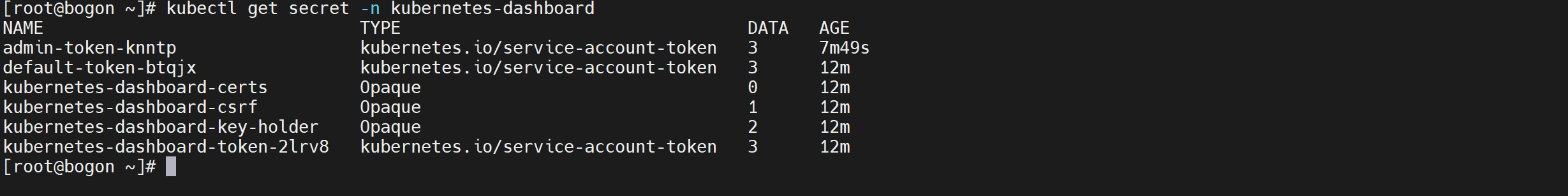

View the Secret objects

```shell
kubectl get secret -n kubernetes-dashboard
```

The ServiceAccount is named admin, so look for the Secret whose name starts with admin.
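The suffix of that Secret's name (`4ppqw` in the command below) is random. In Kubernetes 1.22 a token Secret is still created automatically for each ServiceAccount, so you can look up its name instead of copying it by hand. `kubectl -n kubernetes-dashboard get sa admin -o jsonpath='{.secrets[0].name}'` is one way. The sketch below is another: a hypothetical helper that filters the listing above.

```shell
#!/usr/bin/env bash
# admin_token_secret: read `kubectl get secret --no-headers` output on stdin and
# print the first Secret whose name starts with "admin-token-" (hypothetical helper).
admin_token_secret() {
  awk '$1 ~ /^admin-token-/ { print $1; exit }'
}

# Usage on the master:
# secret=$(kubectl -n kubernetes-dashboard get secret --no-headers | admin_token_secret)
# kubectl -n kubernetes-dashboard get secret "$secret" -o jsonpath='{.data.token}' | base64 -d
```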

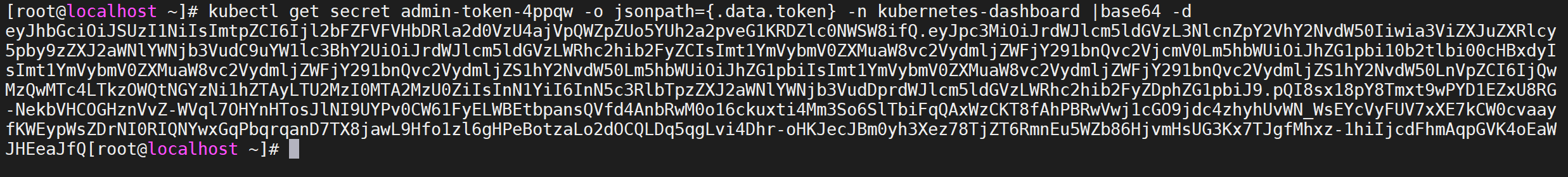

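Get the token

The token in the Secret is stored base64-encoded. The following command decodes it and prints a long string:

```shell
kubectl get secret admin-token-4ppqw -o jsonpath='{.data.token}' -n kubernetes-dashboard | base64 -d
```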

Enter the token

Use the decoded string above as the token to log in to the Dashboard. The new version also adds a dark mode.

Click Sign in to log in.